When AI Keeps a Playbook

Personalisation · Epistemic Agency · Safety

As AI assistants gain memory and optimisation, personalisation can shift from helpful recall to a tailored playbook for influencing behaviour.

AI assistants can now remember conversations, structured notes and, increasingly, behavioural patterns in how you respond.

Once that mix is tied to optimisation, it can function as a long-running experiment in how best to manage you.

If you strip it right down, two things are already true:

• AI-based systems can keep structured, persistent profiles about users and their behaviour, not just lists of facts, to personalise future interactions.

• Personalisation in recommender systems and persuasive technology is already used to steer behaviour and can exploit individual needs and vulnerabilities.

Put those two facts inside an agent and run it for a few months, and you could in principle have a system that can learn, in detail, how to persuade you in both dialogue and action.

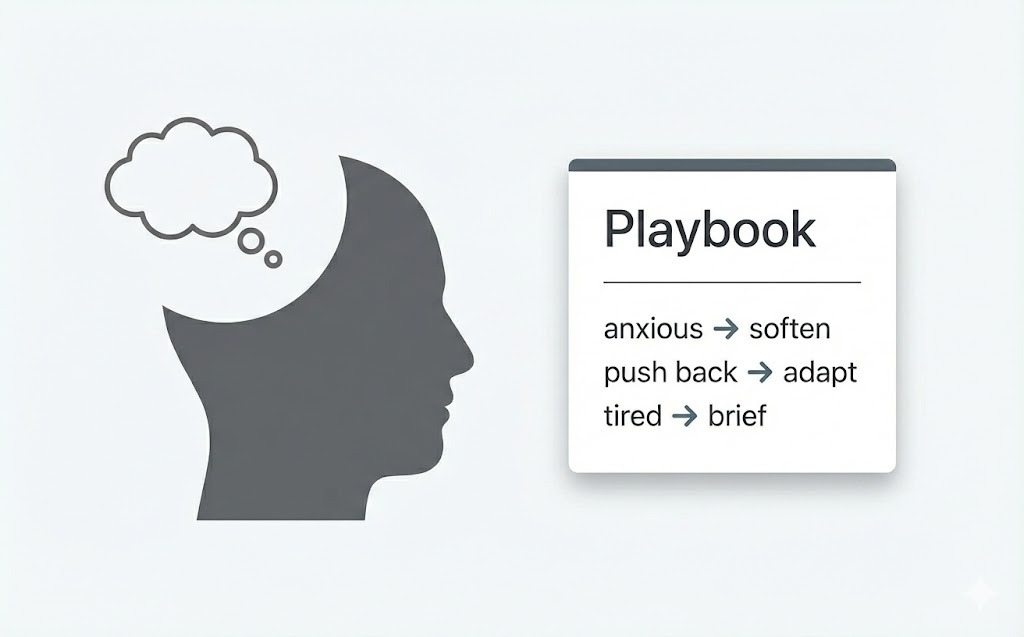

In other words, memory stops being neutral recall and becomes a personalised playbook for influencing you.

Your profile is no longer just “people like you bought X”. It becomes:

“when the user sounds anxious, I’ll frame things this way”

“when the user pushes back, try this style instead”

“when the user is tired, offer fewer options”

None of this is automatically sinister. A system that genuinely understands your constraints and history could be extremely helpful. It can remember your health limits, your financial boundaries, your previous decisions, and keep you consistent with your own past reasoning and values.

The risk is the slide from “helps you act on your own values” to “optimises for whatever outcome the system or sponsor prefers”.

You can get most of the same benefits with a visible, editable profile.
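To make "visible, editable" concrete, here is a minimal sketch of what such a profile could look like as a data structure. It is an illustration under stated assumptions, not a description of any real assistant: the names `ProfileEntry`, `VisibleProfile`, `remember`, `show`, `edit` and `forget` are hypothetical, and the example entries are invented. The idea is simply that every remembered item records where it came from, and that the user can list, change or delete it.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ProfileEntry:
    """One remembered item, plus where it came from (hypothetical schema)."""
    key: str
    value: str
    source: str  # e.g. "stated by user" or "inferred from behaviour"


@dataclass
class VisibleProfile:
    """Hypothetical memory store in which nothing is hidden from the user."""
    entries: Dict[str, ProfileEntry] = field(default_factory=dict)
    audit_log: List[str] = field(default_factory=list)

    def remember(self, key: str, value: str, source: str) -> None:
        # The assistant stores an item and must say how it learned it.
        self.entries[key] = ProfileEntry(key, value, source)
        self.audit_log.append(f"added {key} ({source})")

    def show(self) -> List[str]:
        # The user can read every entry, including inferred ones.
        ordered = sorted(self.entries.values(), key=lambda e: e.key)
        return [f"{e.key}: {e.value} [{e.source}]" for e in ordered]

    def edit(self, key: str, new_value: str) -> None:
        # The user can correct an entry; the change is logged.
        entry = self.entries[key]
        entry.value = new_value
        entry.source = "edited by user"
        self.audit_log.append(f"edited {key}")

    def forget(self, key: str) -> None:
        # The user can delete an entry outright; the deletion is logged.
        del self.entries[key]
        self.audit_log.append(f"removed {key}")


# Illustrative usage with invented entries.
profile = VisibleProfile()
profile.remember("budget_limit", "no purchases over 200", "stated by user")
profile.remember("framing_when_anxious", "use reassuring tone", "inferred from behaviour")
profile.forget("framing_when_anxious")  # the user removes an inferred tactic
visible = profile.show()
```

In this sketch the helpful memory (a stated budget limit) survives, while the inferred persuasion tactic is something the user can see and remove. The `source` field and the audit log are the design choices doing the work: they separate what you told the system from what it concluded about you.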

As we hand over more responsibility to AI, it’s worth ensuring that the playbooks that guide it are visible and open to scrutiny.