Nested AI - A New Paradigm For Continual Learning


Google research proposes “Nested Learning”, where different parts of a model adapt at different speeds after deployment, reducing catastrophic forgetting while preserving stable reasoning.

Nested AI

Google’s recent research directly addresses one of the most criticised limitations of current models: catastrophic forgetting, where learning something new overwrites what the model already knew.

Most large language models are trained once and then frozen. They can be fine-tuned on new examples, but this is slow, and even small updates may change behaviours that were already working well. A system can store local context so that responses feel consistent, but that does not change the model itself.

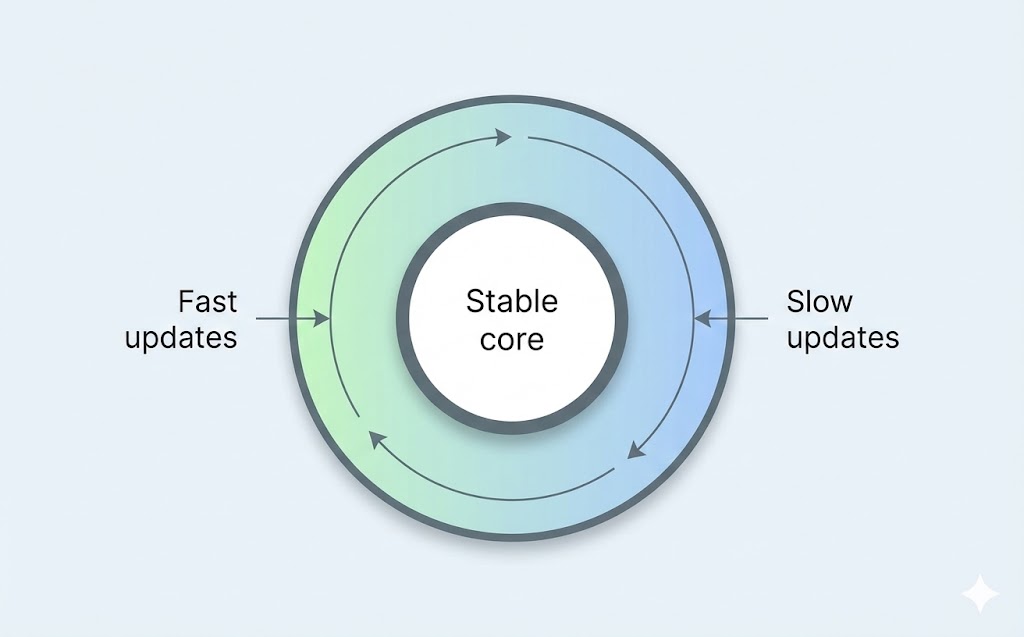

“Nested Learning” proposes allowing different parts of an AI model to learn at different speeds after deployment.

Some parts update quickly to reflect new input.

Others change only with repeated evidence, which protects stable patterns of reasoning.

The model adapts its own internal parameters over time. It can absorb new patterns of behaviour, not just new facts, without retraining the whole system or changing what is already reliable.
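The idea of fast and slow parts can be made concrete with a toy sketch. This is not the method from the Google research, only a simplified illustration of updating parameters at different frequencies. The names `ParamGroup`, `period`, and `run` are hypothetical, and a single number stands in for a whole block of weights. Each group sees the same stream of update signals; the fast group applies every one, while the slow group averages eight of them before changing at all.

```python
from dataclasses import dataclass, field


@dataclass
class ParamGroup:
    """Hypothetical block of parameters that updates once every `period` steps."""
    name: str
    period: int          # number of signals to collect before applying an update
    lr: float            # step size used when an update is applied
    value: float = 0.0   # one scalar stands in for a weight tensor
    _grad_sum: float = field(default=0.0, init=False, repr=False)
    _count: int = field(default=0, init=False, repr=False)

    def observe(self, grad: float) -> bool:
        """Accumulate a signal; apply the averaged update once the period elapses."""
        self._grad_sum += grad
        self._count += 1
        if self._count < self.period:
            return False
        self.value -= self.lr * (self._grad_sum / self._count)
        self._grad_sum, self._count = 0.0, 0
        return True


def run(groups, grads):
    """Feed the same signal stream to every group and count applied updates."""
    updates = {g.name: 0 for g in groups}
    for grad in grads:
        for g in groups:
            if g.observe(grad):
                updates[g.name] += 1
    return updates


fast = ParamGroup("fast", period=1, lr=0.5)
slow = ParamGroup("slow", period=8, lr=0.5)

# A single one-off signal followed by fifteen steps with nothing new to learn.
stream = [1.0] + [0.0] * 15
updates = run([fast, slow], stream)

print(updates)     # how many times each group actually changed its value
print(fast.value)  # the fast group reacted fully to the one-off signal
print(slow.value)  # the slow group averaged it away and barely moved
```

In this toy run the fast group moves eight times further than the slow group in response to the same isolated signal, which is the trade-off described above: quick adaptation in one place, stability in another. A real system would apply this to groups of neural network weights with a proper optimiser rather than to single numbers.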

This loosely mirrors how humans learn: new experiences register quickly, while long-held skills and habits shift only gradually.

This is early-stage work, but the potential is enormous. Models that dynamically reflect real-world changes, while keeping core competencies stable, could fundamentally change how we deploy and maintain AI systems.

Source: Google Research: https://research.google/blog/introducing-nested-learning-a-new-ml-paradigm-for-continual-learning/