Agentic Context Engineering (ACE)


Agentic Context Engineering (ACE) is an approach that lets agents learn from experience by improving their context and playbooks, rather than retraining the model.

How it works:

• After each run, a “reflector” agent distils experience into a short, reusable lesson.

• A “curator” agent integrates each lesson into a structured playbook.

• On the next run, relevant lessons are retrieved from the playbook and injected into the prompt.

• Lessons that help are reinforced. Those that don’t are pruned.

The model itself never changes.

Store → Retrieve → Reinforce/Prune
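A minimal sketch of the Store → Retrieve → Reinforce/Prune cycle follows. It assumes a playbook of tagged lessons, each carrying helpful/harmful counters. The `Lesson` and `Playbook` names, the tag-overlap retrieval and the pruning threshold are all illustrative assumptions, not the paper's implementation. A real system would more likely use embeddings for retrieval and an LLM to write the lesson text.

```python
from dataclasses import dataclass, field


@dataclass
class Lesson:
    """One playbook entry (hypothetical schema)."""
    text: str
    tags: set[str]
    helpful: int = 0  # times the lesson was judged to help
    harmful: int = 0  # times the lesson was judged to hurt

    @property
    def score(self) -> int:
        return self.helpful - self.harmful


@dataclass
class Playbook:
    lessons: list[Lesson] = field(default_factory=list)

    def store(self, text: str, tags: set[str]) -> Lesson:
        """Add a lesson, reusing an existing entry with identical text."""
        for lesson in self.lessons:
            if lesson.text == text:
                return lesson
        lesson = Lesson(text, set(tags))
        self.lessons.append(lesson)
        return lesson

    def retrieve(self, task_tags: set[str], k: int = 3) -> list[Lesson]:
        """Return up to k lessons sharing a tag with the task, best first."""
        matches = [lesson for lesson in self.lessons if lesson.tags & task_tags]
        # Rank by tag overlap first, then by net helpfulness.
        matches.sort(
            key=lambda lesson: (len(lesson.tags & task_tags), lesson.score),
            reverse=True,
        )
        return matches[:k]

    def reinforce(self, lesson: Lesson, helped: bool) -> None:
        """Record whether using the lesson helped on the last run."""
        if helped:
            lesson.helpful += 1
        else:
            lesson.harmful += 1

    def prune(self, min_score: int = -1) -> None:
        """Drop lessons whose net score has fallen below min_score."""
        self.lessons = [lesson for lesson in self.lessons if lesson.score >= min_score]


pb = Playbook()
a = pb.store("Validate API responses before parsing.", {"api"})
b = pb.store("Paginate until the cursor is empty.", {"api", "pagination"})
pb.reinforce(b, helped=True)
pb.reinforce(a, helped=False)
pb.reinforce(a, helped=False)
pb.prune()
print([lesson.text for lesson in pb.retrieve({"api"})])
# → ['Paginate until the cursor is empty.']
```

The lesson that hurt twice falls below the threshold and is pruned. The one that helped survives and is what the next run retrieves.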

Unlike Retrieval-Augmented Generation (RAG), this isn’t about retrieving facts to ground a response. It’s about retrieving strategies to shape behaviour.

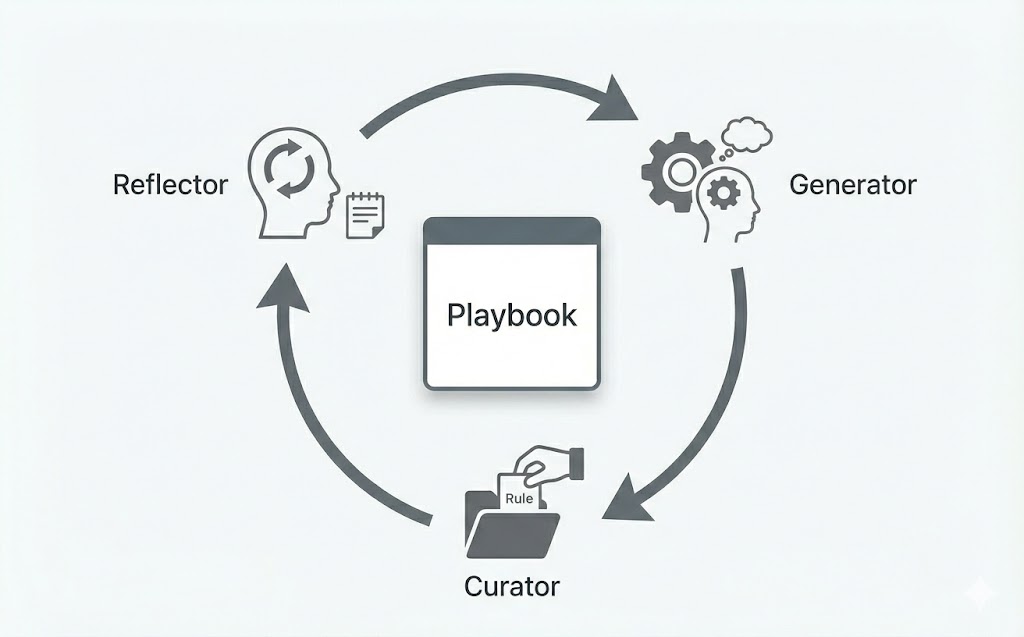

ACE proposes a modular, three-step agentic loop:

Generator

This is the primary “doer” agent. It attempts to complete a given task using the current version of the playbook for guidance.

Reflector

This is the “coach” agent. After the Generator finishes (or fails), it identifies why a failure occurred or what led to a success, and it distils this into a concise, actionable lesson.

Curator

This is the “archivist” agent. It takes the lesson from the Reflector and integrates it into the playbook as a new, structured entry.
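The loop can be sketched with plain functions standing in for the three agents. This is a toy under stated assumptions. `generator`, `reflector`, `curator` and `run_ace` are hypothetical names, and each role would be an LLM call in practice. The success rule is invented purely so the loop has something to learn. The playbook here is just a list of strings.

```python
from typing import Callable


def generator(task: str, playbook: list[str]) -> tuple[str, bool]:
    """Hypothetical "doer": attempts the task using the current playbook."""
    # Toy rule: the task succeeds only if some lesson mentions "retry".
    success = any("retry" in lesson for lesson in playbook)
    trace = f"attempted {task!r} with {len(playbook)} lessons"
    return trace, success


def reflector(task: str, trace: str, success: bool) -> str:
    """Hypothetical "coach": turns the outcome into one actionable lesson."""
    if success:
        return f"Keep using retries for tasks like {task!r}."
    return f"On {task!r}, retry transient failures before giving up."


def curator(playbook: list[str], lesson: str) -> list[str]:
    """Hypothetical "archivist": merges the lesson as a new entry."""
    # Incremental update: append rather than rewrite the whole playbook.
    if lesson in playbook:
        return playbook
    return playbook + [lesson]


def run_ace(
    tasks: list[str],
    playbook: list[str],
    gen: Callable[[str, list[str]], tuple[str, bool]] = generator,
    refl: Callable[[str, str, bool], str] = reflector,
    cur: Callable[[list[str], str], list[str]] = curator,
) -> tuple[list[bool], list[str]]:
    """Run Generator -> Reflector -> Curator once per task."""
    results = []
    for task in tasks:
        trace, success = gen(task, playbook)
        lesson = refl(task, trace, success)
        playbook = cur(playbook, lesson)
        results.append(success)
    return results, playbook


results, playbook = run_ace(["fetch data", "fetch data"], [])
print(results)  # → [False, True]
```

The first attempt fails, the reflector records a retry lesson, and the second attempt succeeds with the same generator. Only the playbook changed between runs.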

Think of it as the agent writing its own operator’s manual, one task at a time.

Is it a good idea to have an agent defining its own rules?

Paper: Agentic Context Engineering: Evolving Contexts for Self-Improving Language Models

https://arxiv.org/abs/2510.04618